For the last month I’ve been toiling in the wonderful world of Android widgets and figured I’d best summarize my findings in a blog post. This is both for posterity and personal reference (in case I ever need to build a widget again). It seems silly, but I can’t tell you the number of times that I’ve forgotten how to do something only to find the answer in my own posts.

In case you don’t know, system widgets are just depictions of app content that you can place on your home screen. Common examples include weather widgets, photo album widgets, and calendar widgets. System widgets have been available since Android 1, and in Android 1.5 developers were given APIs with which to implement their own app-accompanying widgets.

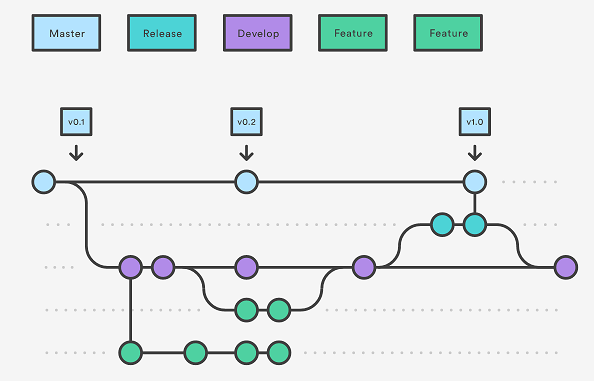

The original widgets, like all Android user interfaces (UIs), were written in XML. Nowadays it’s possible to use a subset of Jetpack Compose-like APIs from what is known as Jetpack Glance. Jetpack Glance went from beta to stable with its 1.1.0 release on June 12, 2024. There’s an issue tracker, but given that releases have slowed from once a month, to once a quarter, to twice a year, you might be waiting a while for your bugs to be fixed.

The point of this post isn’t to walk you through creating a widget. The documentation does that much more thoroughly than I could hope to. This post is also not intended to act as a supplement to the documentation. The Android Developers blog already did that in their excellent article, Demystifying Jetpack Glance for app widgets. Instead, here I will address the pain points that I have personally encountered while writing a fairly bespoke widget for the Dexcom Follow app.

Dexcom Follow is, surprisingly enough, an app that allows you to “follow” patients who choose to share their glucose data with you. This is a common enough scenario for parents of diabetic children or adult children of diabetic parents. Within the app we allow the follower to subscribe to updates from multiple sharers. For each sharer the Follow app displays the most recent EGV (Estimated Glucose Value), whether their glucose is currently trending up or down, and a trend graph displaying the sharer’s EGVs over the last 24 hours.

This is where the Follow widget comes in. Rather than forcing followers to open the app any time they want to check on a sharer, the Follow app widget allows them to view a single sharer’s last EGV and trend graph from the home screen. This last point is where the problems begin.

Jetpack Glance layouts are built with the same underlying components as Jetpack Compose layouts, namely Column, Row, and Box. Columns allow you to arrange elements vertically, rows allow you to do the same horizontally, and boxes allow you to put elements on top of one another. Unlike Jetpack Compose, however, you don’t have access to the Compose Modifier interface. Instead you have to use the much more limited GlanceModifier interface. This interface has an order of magnitude less functionality, severely curtailing what you can accomplish. If you haven’t guessed already, these restrictions rule out building a graph of any kind.
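To make the layout primitives concrete, here is a minimal sketch of a Glance widget built from Column, Row, and Box with GlanceModifier. It is not the Follow widget’s actual code: `SharerWidget`, `SharerContent`, and the placeholder strings are hypothetical names made up for illustration.

```kotlin
import android.content.Context
import androidx.compose.runtime.Composable
import androidx.compose.ui.unit.dp
import androidx.glance.GlanceId
import androidx.glance.GlanceModifier
import androidx.glance.appwidget.GlanceAppWidget
import androidx.glance.appwidget.provideContent
import androidx.glance.layout.Alignment
import androidx.glance.layout.Box
import androidx.glance.layout.Column
import androidx.glance.layout.Row
import androidx.glance.layout.fillMaxSize
import androidx.glance.layout.fillMaxWidth
import androidx.glance.layout.padding
import androidx.glance.text.Text

// Hypothetical widget class; the name and content are illustrative only.
class SharerWidget : GlanceAppWidget() {
    override suspend fun provideGlance(context: Context, id: GlanceId) {
        provideContent {
            // Placeholder strings, not real data.
            SharerContent(name = "Sharer name", reading = "latest reading")
        }
    }
}

@Composable
private fun SharerContent(name: String, reading: String) {
    // Column stacks its children vertically.
    Column(modifier = GlanceModifier.fillMaxSize().padding(8.dp)) {
        // Row lays its children out horizontally.
        Row(
            modifier = GlanceModifier.fillMaxWidth(),
            verticalAlignment = Alignment.CenterVertically,
        ) {
            // defaultWeight() lets the name take the remaining width.
            Text(text = name, modifier = GlanceModifier.defaultWeight())
            Text(text = reading)
        }
        // Box layers its children; here it only reserves the area
        // where a graph would go, since Glance cannot draw one.
        Box(
            modifier = GlanceModifier.defaultWeight().fillMaxWidth(),
            contentAlignment = Alignment.Center,
        ) {
            Text(text = "graph area")
        }
    }
}
```

Note that every modifier in the sketch comes from `androidx.glance`, not `androidx.compose.ui`. There is no drawing modifier to reach for here, which is the limitation described above.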

The workaround (because there is always a workaround) is rather clever. The approach was outlined in this r/androiddev Reddit post. While you can’t draw graphs in Jetpack Glance, you can do so pretty easily in Jetpack Compose. And while you can’t use Jetpack Compose in widgets, you can display a screenshot of a Jetpack Compose layout in Jetpack Glance. So what the author of the post did, as seen in their gist, was render an invisible Jetpack Compose graph, take a screenshot of it, and then use that screenshot in a Jetpack Glance Image view.
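The Glance half of that trick is the simpler one, and can be sketched as follows. This assumes the screenshot already exists as an `android.graphics.Bitmap`; how the Compose graph is rendered into that bitmap is what the gist covers and is not reproduced here. `GraphImage` and `graphBitmap` are hypothetical names.

```kotlin
import android.graphics.Bitmap
import androidx.compose.runtime.Composable
import androidx.glance.GlanceModifier
import androidx.glance.Image
import androidx.glance.ImageProvider
import androidx.glance.layout.ContentScale
import androidx.glance.layout.fillMaxSize

// Hypothetical composable: shows a pre-rendered screenshot of the
// Compose graph inside a Glance layout.
@Composable
fun GraphImage(graphBitmap: Bitmap) {
    Image(
        // ImageProvider wraps the bitmap so Glance can hand it to the widget host.
        provider = ImageProvider(graphBitmap),
        contentDescription = "Glucose trend graph",
        modifier = GlanceModifier.fillMaxSize(),
        // Stretch to the available space; ContentScale.Fit would keep the
        // aspect ratio instead, at the cost of possible letterboxing.
        contentScale = ContentScale.FillBounds,
    )
}
```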

The problem with their gist, unfortunately, is that it uses a lot of APIs that are considered outdated as of Android Gradle Plugin 8.6.1. Worse, it uses them in such esoteric ways that trying to copy-and-paste the gist into your Android Studio project unaltered will actually crash Intellisense, preventing you from addressing the errors in any coherent manner. To get around this I had to manually type the code into my editor line by line, addressing errors as they came up. Doing so allowed me to create the modernized functions captured in the embedded gist below:

The workaround only took me part of the way. After all, widgets are resizable and screenshots are not. Glance composables recompose as soon as a change in size is detected, but not when a change in a flow observed through collectAsState() is triggered. Thus the screenshot must be generated before the widget is resized. My solution was to create a lookup table of screenshots for various widths and heights and pick the one that most closely matches the widget size at the time of recomposition. This way the user can resize the widget to their heart’s content and it looks like the widget is rendering the adjustments in real time. In reality it’s just swapping between pre-rendered screenshots.
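The lookup itself can be sketched in plain Kotlin. This is a simplified illustration rather than the Follow widget’s implementation: `WidgetSize`, `ScreenshotTable`, and the distance measure (summed difference in width and height) are all assumptions, and the table is generic so that the stored value could be a `Bitmap` on Android or anything else in a test.

```kotlin
import kotlin.math.abs

// Hypothetical key type: a widget size in dp.
data class WidgetSize(val widthDp: Int, val heightDp: Int)

// Hypothetical lookup table mapping pre-rendered sizes to screenshots.
class ScreenshotTable<T>(private val entries: Map<WidgetSize, T>) {
    init {
        require(entries.isNotEmpty()) { "At least one screenshot is required" }
    }

    // Returns the screenshot whose size is closest to the requested one.
    fun closestTo(target: WidgetSize): T {
        val best = entries.entries.minByOrNull { (size, _) -> distance(size, target) }
        return checkNotNull(best).value
    }

    // Assumed metric: sum of absolute differences in width and height.
    private fun distance(a: WidgetSize, b: WidgetSize): Int =
        abs(a.widthDp - b.widthDp) + abs(a.heightDp - b.heightDp)
}
```

Inside a Glance composable, the target size would come from the widget’s current dimensions; with `SizeMode.Exact`, Glance exposes these through `LocalSize.current`. The choice of sizes to pre-render is a trade-off: more entries mean smoother-looking resizing but more bitmaps to generate and hold.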

All this isn’t to say that there weren’t other issues that I encountered when developing the Follow widget. These were just the most challenging and required the most outside-the-box thinking. I hope you were able to take something away from this post. If nothing else I know my future self will appreciate it!

Photo by Tim Mossholder on Unsplash
